LLMs like ChatGPT gained popularity due to their ability to provide a conversational experience.

But isn’t that the same with search engines?

Let’s take a look at how LLMs stack up against search engines:

Operations

LLMs are trained on massive amounts of data to understand complex text, recognize patterns, and generate human-like text.

Search engines like Google, by contrast, are built to retrieve existing content from the web.

Relevance has always been their selling point, but before the rise of LLMs, search engines relied primarily on keyword matching, favoring pages that contain the exact query terms.
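As a rough illustration of exact-term keyword matching, here is a minimal Python sketch. The `tokenize` and `keyword_match` helpers and the two sample pages are hypothetical, made up for this example; a real search engine layers ranking, stemming, and many other signals on top of this idea.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def keyword_match(query: str, pages: dict[str, str]) -> list[str]:
    """Return the names of pages that contain every term in the query."""
    terms = tokenize(query)
    return [name for name, body in pages.items() if terms <= tokenize(body)]

# Hypothetical sample pages, for illustration only.
pages = {
    "tax-guide": "How to calculate state income tax in California",
    "payroll-faq": "What employers withhold from your paycheck each month",
}

print(keyword_match("income tax", pages))           # ['tax-guide']
print(keyword_match("paycheck deductions", pages))  # []
```

The second query is clearly related to the payroll page, yet it returns nothing because the word "deductions" never appears there. That gap between matching terms and matching intent is what the comparison in this section is about.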

LLMs go further by interpreting the context and intent behind a query. Drawing on what they learned during training, they can often give more nuanced and contextually relevant answers.

Knowledge Sources

Search engines continuously crawl and index the web to find and organize the latest information on various subjects.

In contrast, Large Language Models (LLMs) are trained on large datasets compiled from a wide range of existing sources. That breadth lets them interpret a broad spectrum of topics and generate original, contextually relevant content.

Although most LLMs lack real-time internet access, they can still provide in-depth, nuanced insights from their training, which is often enough for questions that do not depend on the most current data.

However, recent advancements in LLMs, such as GPT-4, have introduced limited web browsing capabilities. This feature allows these models to pull in and utilize up-to-date information from the web within specific operational constraints.

Interactions & Output Quality

LLMs are designed for conversational interactions, facilitating dynamic, back-and-forth dialogues. Search engines like Google primarily offer a one-way exchange: you submit a query and get back hyperlinks or references to external sources.

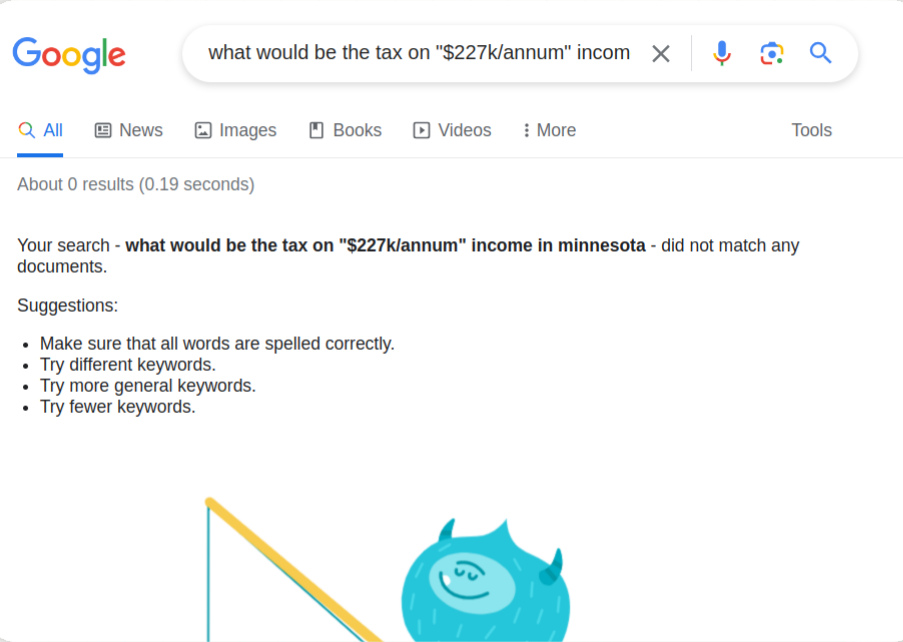

You enter a question, and the search engine gives links to related results. The more specific the question, the narrower the results:

Want to know your income tax for a particular state in the US?

Here are the results from Google:

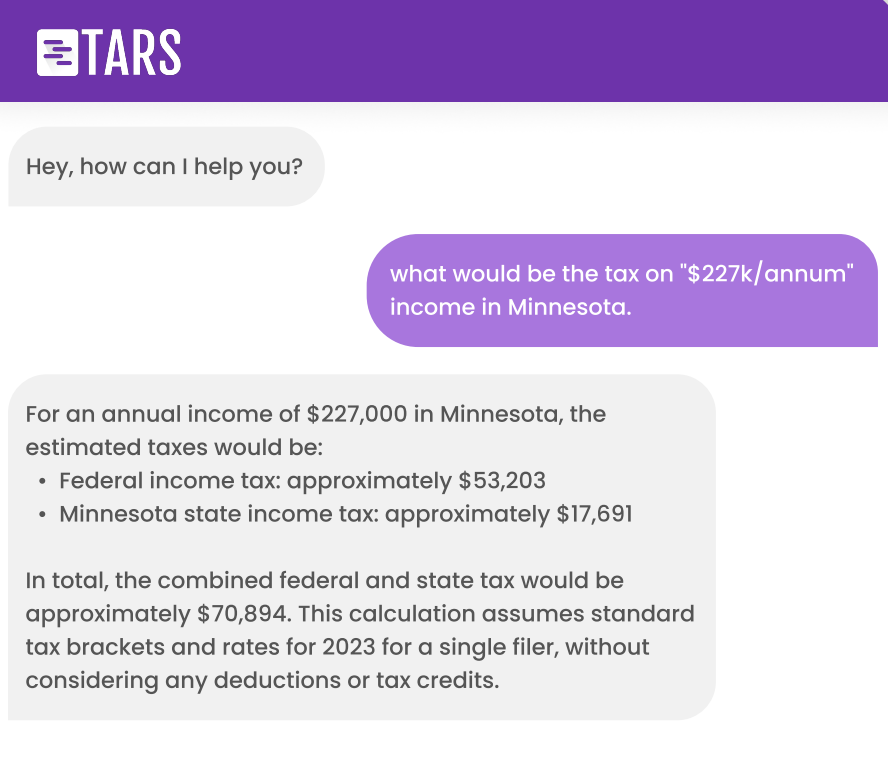

LLMs, by contrast, can perform the calculation and generate a personalized response for you.

Unlike search engines, which essentially return a list of sources, LLMs can present detailed solutions for in-depth analysis or offer several context-aware viewpoints.

Wrapping Up

LLMs distinctly enhance and go beyond what traditional search engines offer. They are an integral aspect of sophisticated AI solutions across all major sectors.

Digital leaders and CXOs are already employing LLMs to improve customer experience (CX) for their businesses. These models efficiently handle routine inquiries, significantly easing the workload of customer support agents.

At TARS, we help businesses tackle complex workflows with our expertise in enterprise customer support automation.

Book a free AI consultation with us.

Vyshnav is a writer and editor who’s been creating written and multimedia marketing content for agencies and businesses since 2018. His work spans multiple industries, but has ultimately led him to focus on conversational AI as a core niche.

Vyshnav writes and manages different types of content for TARS, particularly in automation & customer experience domains for BFSI.

In his spare time, you can find him either busy with ancient scriptures or trekking across the Western Ghats.
